
One of the major challenges for any IT organization is to simplify tasks and speed up project delivery. Modern applications are in high demand and continuously expand to handle large amounts of data generated by devices and end users. This requires software development companies to keep adapting to new deployment environments so that applications run smoothly and without errors. Unfortunately, manual provisioning and infrastructure configuration are slow and inefficient. This is where infrastructure automation comes into the picture. It helps IT organizations overcome these challenges, simplify operations, and improve efficiency and speed by letting software teams perform critical management tasks with minimal human intervention.

Before we proceed further, let us first look at what infrastructure automation means and why it is important.

1. What is Infrastructure Automation?

Infrastructure automation reduces the need for human interaction with IT systems by creating functions that other software can call or that can be run on command. An automated infrastructure makes setups repeatable, so developers can quickly create environments that each serve a single purpose, such as user acceptance testing, integration, development, or production. Architectures such as microservices, and monolithic applications as well, benefit greatly from infrastructure-level automation.
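To make the idea of repeatable environments concrete, here is a minimal Python sketch, assuming environments are described as plain data. Every environment is derived from one shared base definition, so the testing, integration, development, and production setups differ only in explicitly overridden fields. The `Environment` fields, the instance types, and the version string are hypothetical placeholders, not any particular tool's schema.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Environment:
    """Hypothetical description of one environment; not a real tool's schema."""
    name: str
    purpose: str
    instance_type: str
    instance_count: int
    app_version: str


# Single source of truth: every environment starts from this base definition.
BASE = Environment(
    name="base",
    purpose="template",
    instance_type="t3.small",
    instance_count=1,
    app_version="1.4.2",
)


def make_environment(name: str, purpose: str, **overrides) -> Environment:
    """Derive an environment from BASE, changing only the fields passed in."""
    return replace(BASE, name=name, purpose=purpose, **overrides)


ENVIRONMENTS = [
    make_environment("uat", "user acceptance testing"),
    make_environment("integration", "integration"),
    make_environment("dev", "development"),
    # Production differs only in capacity; everything else is inherited.
    make_environment("prod", "production", instance_type="m5.large", instance_count=3),
]

for env in ENVIRONMENTS:
    print(env.name, env.instance_type, env.instance_count, env.app_version)
```

Because every difference is an explicit override, recreating an environment yields the same result each time, and all environments stay on the same application version.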

This concept can be applied to automate processes and easily control IT elements, including storage, servers, operating systems, network elements, development environments, and more. The main aim is to improve the efficiency of an organization's IT operations and staff.

Container registries such as Amazon ECR, Docker Hub, and JFrog Container Registry are among the many tools that form a useful part of infrastructure automation.

2. Why is It Important?

Most IT organizations struggle with the growing size and complexity of their infrastructure. With limited time and resources, IT teams often fail to keep pace with this growth, which results in delayed updates, resource delivery, and patching. Applying automation to common, repetitive tasks such as configuration, provisioning, deployment, and decommissioning makes operations much easier to scale and lets businesses regain control over, and visibility into, their infrastructure.

Infrastructure automation comes with many benefits, including the following:

2.1 Provisioning

Infrastructure automation can help organizations reduce the time needed to provision new networks and VMs from weeks to minutes. This is especially valuable in today's hybrid and multi-cloud IT environments, where orchestration and automation work hand in hand to keep business operations and product deployments running smoothly.

2.2 Capacity Planning

Another advantage of infrastructure automation is that it helps prevent under- and over-provisioning across the organization. In most organizations, some waste occurs because workloads and project deployments lack consistent standards. Infrastructure automation reduces these inconsistencies by eliminating complexity and increasing the standardization of processes. It can also help identify the areas where incorrect provisioning is affecting workload deployments and resource allocation.
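Flagging incorrect provisioning can start as simple arithmetic on utilization data. The sketch below is a minimal Python example, assuming you already collect average CPU utilization per resource; the 20%/80% thresholds, the 50% target, and the resource names are hypothetical values chosen for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Resource:
    name: str
    allocated_vcpus: int
    avg_cpu_utilization: float  # fraction between 0.0 and 1.0 over an observation window


# Illustrative thresholds; real values depend on the workload and on policy.
LOW, HIGH, TARGET = 0.20, 0.80, 0.50


def classify(resource: Resource) -> str:
    """Label a resource by how well its allocation matches its observed usage."""
    if resource.avg_cpu_utilization < LOW:
        return "over-provisioned"
    if resource.avg_cpu_utilization > HIGH:
        return "under-provisioned"
    return "right-sized"


def suggested_vcpus(resource: Resource) -> int:
    """vCPUs needed to bring average utilization back to TARGET (at least 1)."""
    used = resource.allocated_vcpus * resource.avg_cpu_utilization
    return max(1, math.ceil(used / TARGET))


fleet = [
    Resource("reporting-db", allocated_vcpus=8, avg_cpu_utilization=0.10),
    Resource("checkout-api", allocated_vcpus=4, avg_cpu_utilization=0.95),
    Resource("search", allocated_vcpus=4, avg_cpu_utilization=0.50),
]

for r in fleet:
    print(f"{r.name}: {classify(r)}, suggest {suggested_vcpus(r)} vCPUs")
```

In this example the database uses about 0.8 of its 8 vCPUs, so 2 vCPUs would bring it to the 50% target, while the API would need 8 to come down from 95%.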

2.3 Cost

Whoever manages an IT budget keeps a close eye on every cent going out. But what if you lose visibility into how much is being spent and where it is going? This problem is common with cloud resource consumption, and an Environment as a Service (EaaS) infrastructure automation tool such as Torque can help manage and eliminate runaway costs.

2.4 Reduce Human Error

Automating Infrastructure reduces vulnerabilities associated with human error during manual provisioning and allows you to focus on core development rather than investing efforts in repetitive processes.

2.5 Business Risk

Some business risk comes from security deficiencies. Maintaining security and compliance at the same time is hard: it requires a lot of time and effort, it is prone to error, and standards change over time. This is where blueprint environments help. With predefined blueprints, IT teams can make sure that the software developers who need cloud environments can spin them up in a consistent, approved way.

2.6 Improving Workflows

Infrastructure automation brings repeatability and accuracy to IT provisioning. Operations teams define the expected requirements for provisioning infrastructure, and automation tools execute the tasks when those conditions are met.
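The split between requirements (set by the operations team) and execution (done by tools) can be sketched as condition/action rules. This is a minimal Python model, assuming metrics arrive as a dictionary; the rule names, metric keys, and thresholds are hypothetical, and a real system would call a provisioning API where this sketch returns strings.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Rule:
    name: str
    condition: Callable[[Dict[str, float]], bool]  # the requirement the ops team defines
    action: Callable[[Dict[str, float]], str]      # the task automation runs when it holds


def run_rules(rules: List[Rule], metrics: Dict[str, float]) -> List[str]:
    """Evaluate every rule against current metrics and run the ones whose condition is met."""
    return [f"{rule.name}: {rule.action(metrics)}" for rule in rules if rule.condition(metrics)]


# Hypothetical rules; the actions return descriptions instead of calling a real cloud API.
RULES = [
    Rule("scale-out", lambda m: m["cpu"] > 0.80, lambda m: "add 1 instance"),
    Rule("expand-disk", lambda m: m["disk_free_gb"] < 10, lambda m: "grow volume by 50 GB"),
]

print(run_rules(RULES, {"cpu": 0.92, "disk_free_gb": 40}))
```

Keeping the conditions as data makes them easy to review and change without touching the code that executes them.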

2.7 Inability to Scale

Many companies find it hard to scale. Bottlenecks in environment provisioning are one cause, and fragile toolchains, built from the disparate tools each pipeline uses, are another. Infrastructure automation helps IT teams simplify and improve their DevOps toolchains and reduces the troubleshooting burden with built-in troubleshooting and validation features.

2.8 Other Benefits

- Changes to the system become routine, and the system can handle them without stress for IT staff or users.

- Users have the ability to manage, define, and provision the resources that they need, without needing IT staff to do it for them.

- IT infrastructure enables change rather than being a constraint or an obstacle.

- IT staff spend their time on valuable work that engages their abilities, rather than on repetitive tasks.

- Solutions to problems are proven through testing and implementing them, rather than by only discussing them in documents and meetings.

3. Which IT Infrastructure Processes can be Automated?

Here are the IT infrastructure processes that tech companies can automate:

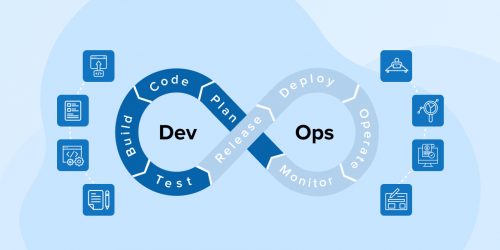

| Process | How Automation Helps |
| --- | --- |
| DevOps for Infrastructure | DevOps tools enable infrastructure automation, letting operations teams and developers monitor, manage, and provision resources automatically. These tools support, automate, and orchestrate Infrastructure as Code (IaC) instead of manual processes. |
| Self-Service Cloud | Self-service clouds can be automated by turning data centers into public or private cloud infrastructure and evolving them according to the providers and the organization's objectives. |
| System Maintenance | IT teams struggle to keep up with growing responsibilities at their current staffing levels. System maintenance automation frees them from tedious operations, so they can manage large, complex IT infrastructures with their existing staff and concentrate on more rewarding, strategic projects. |
| Multi-Cloud Automation | Multi-cloud automation tools help companies extend self-service automation across different public cloud platforms, such as Microsoft Azure, Amazon Web Services, and Google Cloud Platform. |
| Network Automation | Network automation automates the company's network deployment services, provides networking capabilities for security, and enables full life-cycle automation of applications. |
| Configuration | Your IT infrastructure contains a wide variety of software and hardware, which can lead to higher maintenance costs and an inability to meet strict service-level agreements. Automation provides predictable, repeatable processes for managing configurations across operating systems, which improves consistency, increases uptime, and speeds up changes. |
| Cluster Automation | Infrastructure automation tools help clusters handle their functions automatically. They also help manage cluster downtime and provide higher availability for users. |

4. Challenges in Implementing Infrastructure Automation

The demand for automated infrastructure management keeps increasing, and it is becoming a necessity for every organization. More and more IT organizations are adopting new techniques and getting used to them, but this does not mean they avoid challenges; in many cases, they face more of them.

Introducing new technologies for higher productivity and smoother operations does not always improve performance right away. It can even slow things down at first, because teams need time to understand, configure, and optimize the IT infrastructure for the new technology. Besides this, companies face several other issues when adopting infrastructure automation:

4.1 Outdated Infrastructure

Automation is key to adopting modern practices such as DevOps. An outdated infrastructure with little automation and no self-service can never be efficient, because infrastructure automation requires constant upgrades and improvements to avoid problems. Good automation strategies take shape when an IT organization's pre-production and production environments are equally reliable, when the organization is resilient to failure, and when it has quick access to its processes.

4.2 Culture

Adopting automation often requires a new culture in an IT organization, and that culture depends on learning and a willingness to accept disruption. If employees are not in the right mindset, the project can fail: optimizing infrastructure can slow down operations and development when the team is not aligned. Adapting to new, advanced technology is especially difficult for employees who lack the right technical background and mindset.

Therefore, organizations first need to address this issue and help employees build their skills before adopting automation and the latest technologies that come with it.

4.3 Tools and Apps

One of the major challenges IT organizations face with infrastructure automation is modifying applications. Changing an application takes a lot of time and effort, largely because of the gap between software developers and business users. Users are often unaware of the complexity of back-end integration and expect a single app to solve all their problems. Developers, on the other hand, may lack a systematic view of the business and fail to understand why a quick-fix tool won't work. If developers or users then turn to unauthorized tools, the situation gets even worse and the process more complex.

4.4 Communication and Processes

Infrastructure automation practices such as DevOps depend on different departments working together at the same time, so all team members must be on the same page. Provisioning resources can also take a long time when communication channels are outdated and test processes are built manually.

4.5 Budgeting

The last challenge companies face while implementing infrastructure automation is budgeting. In any organization, capital and operational expenses are interconnected. When companies adopt automation, they begin to scale up their infrastructure, which adds expenses on top of the existing infrastructure and may not be budget-friendly for every company.

5. How Does Infrastructure Automation Work in Any Organization?

Infrastructure automation brings repeatability and predictability to the processes used to configure IT workloads. It helps the IT department meet its service level agreements (SLAs) by freeing up valuable IT resources and reducing complexity, so the organization can focus on business value rather than low-level infrastructure management. Over time, this helps companies increase uptime and deploy new workloads faster and more consistently.

Besides this, as infrastructures grow in any organization, automation enables the teams of the company to manage complex environments with the existing staff. This means that infrastructure automation streamlines ongoing operations like user access management, network management, storage administration, IMAC (install, move, add, change) of workloads, data administration, troubleshooting, deploying application workloads, and debugging. In addition to this, infrastructure automation is a concept that enables businesses to take advantage of multi-cloud provisioning and self-service with consistency across different clouds.

Infrastructure automation has three main phases:

5.1 Adopt and Establish a Provisioning Workflow

Manually provisioning and updating infrastructure several times a day, from different sources and with a variety of workflows, invites chaos. Teams may also struggle to share a view of the organization's infrastructure and to collaborate on it. To overcome this, businesses must adopt and establish infrastructure provisioning workflows that are consistent for any cloud, private data center, or service.
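One way to keep the workflow identical across targets is to put every target behind the same small interface, so teams always follow the same steps no matter where the resource lands. The following Python sketch assumes that design; `Provider`, `ExampleCloud`, `ExampleDataCenter`, and the spec fields are hypothetical names for illustration, not a real SDK.

```python
from abc import ABC, abstractmethod
from typing import Dict, List


class Provider(ABC):
    """Hypothetical adapter that each target (cloud, data center, service) implements."""

    name = "provider"

    @abstractmethod
    def validate(self, spec: Dict[str, str]) -> List[str]:
        """Return a list of problems with the request (empty if valid)."""

    @abstractmethod
    def apply(self, spec: Dict[str, str]) -> str:
        """Provision the resource and return a short description of what was done."""


class ExampleCloud(Provider):
    name = "example-cloud"

    def validate(self, spec):
        return [] if "size" in spec else ["missing 'size'"]

    def apply(self, spec):
        return f"{self.name}: created {spec['name']} ({spec['size']})"


class ExampleDataCenter(Provider):
    name = "example-dc"

    def validate(self, spec):
        return [] if "rack" in spec else ["missing 'rack'"]

    def apply(self, spec):
        return f"{self.name}: allocated {spec['name']} in rack {spec['rack']}"


def provision(provider: Provider, spec: Dict[str, str], audit_log: List[str]) -> str:
    """The same steps for every target: validate, record, then apply."""
    errors = provider.validate(spec)
    if errors:
        audit_log.append(f"{provider.name}: rejected {spec.get('name')}: {'; '.join(errors)}")
        raise ValueError("; ".join(errors))
    result = provider.apply(spec)
    audit_log.append(result)
    return result


log: List[str] = []
print(provision(ExampleCloud(), {"name": "web", "size": "small"}, log))
print(provision(ExampleDataCenter(), {"name": "db", "rack": "r12"}, log))
```

Adding a new target then means writing one more adapter, while the workflow itself, and the shared audit log, stay the same for everyone.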

5.2 Operate at Scale and Optimize

A standardized workflow alone is not enough; businesses must continuously optimize their infrastructure and operate at scale to reap the benefits of infrastructure automation. To do this, extend automated, self-service infrastructure provisioning to developers, with policies in place to detect and remediate policy violations.
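Policies that gate self-service provisioning are often written as code so they can run automatically on every request. Here is a minimal Python sketch, assuming requests arrive as dictionaries: the tag names, the instance-type allow-list, and the remediation choices are hypothetical examples, not rules from any specific policy engine.

```python
from typing import Dict, List

# Hypothetical organization policies, expressed as data so they can be versioned and reviewed.
REQUIRED_TAGS = {"owner", "cost-center"}
ALLOWED_INSTANCE_TYPES = {"t3.small", "t3.medium"}


def check_policies(request: Dict) -> List[str]:
    """Return every policy a self-service provisioning request violates."""
    violations = []
    missing = REQUIRED_TAGS - set(request.get("tags", {}))
    if missing:
        violations.append(f"missing tags: {sorted(missing)}")
    if request.get("instance_type") not in ALLOWED_INSTANCE_TYPES:
        violations.append(f"instance type {request.get('instance_type')!r} is not allowed")
    if request.get("public_ip", False):
        violations.append("public IP addresses are not allowed")
    return violations


def remediate(request: Dict) -> Dict:
    """Apply safe automatic fixes; anything left over still needs a human decision."""
    fixed = dict(request)
    # Existing tags win over the placeholder defaults.
    fixed["tags"] = {"owner": "unassigned", "cost-center": "unassigned", **request.get("tags", {})}
    fixed["public_ip"] = False
    return fixed


request = {"instance_type": "t3.small", "tags": {"owner": "ana"}, "public_ip": True}
print(check_policies(request))
print(check_policies(remediate(request)))
```

Only low-risk fixes are automated here; a disallowed instance type is still reported, because choosing a replacement is a decision a person should make.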

5.3 Standardize the Workflow

To standardize the provisioning workflow across your organization, make sure it provides all the required security and maximizes efficiency. Years ago, infrastructure provisioning followed a ticket-based approach that made IT a gatekeeper. IT teams acted as infrastructure governors, but they also created bottlenecks and restricted developer productivity. To avoid this, organizations need a standardized workflow that reduces redundant work and includes proper guardrails for operational consistency and security.

6. Well-Known Infrastructure Automation Tools

Here are the top infrastructure automation tools that companies can use to automate their infrastructure and streamline day-to-day operations.

6.1 AWS CloudFormation

AWS CloudFormation is one of the best-known cloud-based infrastructure automation tools. It lets businesses set up AWS resources using infrastructure as code, and it helps monitor and manage resources and applications on AWS, which saves time and effort. It provisions and configures resources from templates, which are used to create, update, and delete stacks.
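To show what provisioning from templates looks like in practice, here is a minimal Python sketch that builds a CloudFormation template as JSON. The template sections (`AWSTemplateFormatVersion`, `Parameters`, `Resources`, `Outputs`) and the `AWS::S3::Bucket` resource type are standard CloudFormation; the logical names `ArtifactBucket` and `EnvironmentName` and the allowed values are hypothetical choices for this example.

```python
import json


def build_template() -> dict:
    """Return a minimal CloudFormation template (as a Python dict) for one S3 bucket."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Example: versioned S3 bucket tagged with its environment",
        "Parameters": {
            # One template, many stacks: the environment is chosen at deploy time.
            "EnvironmentName": {
                "Type": "String",
                "AllowedValues": ["dev", "staging", "prod"],
                "Default": "dev",
            }
        },
        "Resources": {
            "ArtifactBucket": {
                "Type": "AWS::S3::Bucket",
                "Properties": {
                    "VersioningConfiguration": {"Status": "Enabled"},
                    "Tags": [{"Key": "Environment", "Value": {"Ref": "EnvironmentName"}}],
                },
            }
        },
        "Outputs": {
            # For an S3 bucket, Ref returns the bucket name.
            "BucketName": {"Value": {"Ref": "ArtifactBucket"}}
        },
    }


print(json.dumps(build_template(), indent=2))
```

Saved as `bucket.json`, the same template could then be deployed once per environment with the AWS CLI, for example `aws cloudformation deploy --template-file bucket.json --stack-name artifacts-dev --parameter-overrides EnvironmentName=dev`, and again with a different (hypothetical) stack name and parameter value for production.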

If you're using AWS CloudFormation to build infrastructure as code, consider the following practices:

- To ensure the AWS CloudFormation templates are working as expected, test them periodically.

- Automate AWS CloudFormation testing with TaskCat.

- Create modular templates.

- Use the same names for common parameters.

- Utilize existing repositories as submodules.

- Make use of parameters to identify paths to your external assets.

- Use an integrated development environment with linting.

AWS CloudFormation enables IT organizations to scale their infrastructure globally. It also helps them meet compliance, security, and configuration requirements across all AWS Regions and accounts.

Pros:

- While deploying new resources, you can easily apply changes to your current resources with AWS CloudFormation.

- AWS CloudFormation helps improve the overall security of your AWS environment by reducing the risk of human errors that can turn into cloud breaches.

- You can easily apply the same configuration repeatedly using CloudFormation templates to define and deploy AWS resources.

- Once you have created a CloudFormation template, you can deploy multiple instances of the same resources from that single template.

- AWS CloudFormation allows you to track changes without looking through logs.

Cons:

- AWS CloudFormation might not suit every project because of its size limits: a template body passed directly in a request can be at most 51,200 bytes, so larger templates must be uploaded to Amazon S3.

- Nested stacks are difficult to manage and implement in the AWS Cloud environment.

- Modularization in CloudFormation is less mature than in similar tools.

6.2 AWS CodePipeline

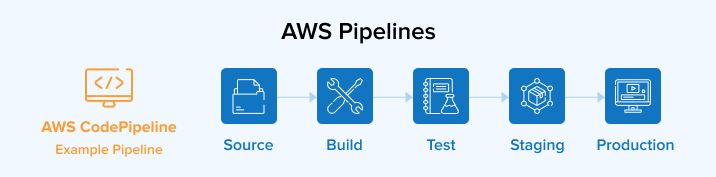

AWS CodePipeline is one of the most popular automation solutions on the market. It lets IT organizations automate the steps required to release software and infrastructure changes, and it works with other AWS services as well as third-party tools.

How does AWS CodePipeline work?

AWS CodePipeline is a fully managed continuous delivery service that models a release process as a sequence of stages, such as source, build, test, and deploy, and runs them each time there is a code change. It allows you to automate your release pipelines for reliable application and infrastructure updates.

Have a look at some best practices for CodePipeline resources:

- Use authentication and encryption for the source repositories that connect to your pipelines.

- Integrate CodePipeline with third-party tools such as GitHub and Jenkins to use them efficiently.

- Use AWS logging features, such as AWS CloudTrail, to track user actions.

- If you use Jenkins, install it on an Amazon EC2 instance and configure a separate EC2 instance profile.

Pros:

- You can bring your own build tools and programming runtimes.

- It is not required to pay for idle build server capacity.

- No need to set up, update, and manage software and build servers.

- It allows you to rapidly release new features and automates your software release process.

- It is easy to model your release workflow and automate the movement of changes through each stage.

Cons:

- The overall usability and console UI are poor.

- A complete pipeline has to be composed of multiple AWS services, which makes it complex.

- Your infrastructure becomes entirely dependent on AWS.

6.3 Azure DevOps

Azure DevOps supports a collaborative culture. It offers a set of processes that bring together project managers, developers, and contributors to create software, and it enables IT organizations to develop and improve products faster than traditional software development approaches allow. Whether they work in the cloud or on-premises, organizations can streamline their process with integrated Azure DevOps features such as:

Azure Boards: Plan, track, and discuss work across teams using proven agile tools, so you can deliver value to your users.

Azure Pipelines: Build, test, and deploy with CI/CD that works with any language, platform, and cloud. Azure Pipelines can also connect to GitHub and deploy continuously.

Azure Kubernetes Service: Ship containerized applications faster and operate them easily using a fully managed Kubernetes service.

Azure Monitor: Get full observability into your network, applications, and infrastructure.

Azure Test Plans: Test and ship with confidence using a manual and exploratory testing toolkit.

Pros:

- It allows you to run containerized web applications on various operating systems such as Linux and Windows.

- It offers benefits such as reduced complexity, better product delivery, faster issue resolution, and much more.

- Azure DevOps allows you to use automation so that you can build faster and more efficiently.

- It helps maintain application quality while delivering reliably at a more rapid pace.

- It helps you manage and operate your infrastructure efficiently with reduced risk.

Cons:

- As a complex technology, the learning curve of Azure DevOps is steep.

- Infrastructure costs for a DevOps environment can be high.

- Adoption can bring unrealistic expectations and added complexity.

6.4 Jenkins

Jenkins is a leading open-source automation server that offers numerous plugins to support building, deploying, and automating any project. To use it, an organization connects it to a version control system such as SVN or Git (for example, GitHub). When new code is pushed to the repository, Jenkins builds and tests it and notifies the IT team about the changes and the results.

Pros:

- As an open-source tool, it can be freely and easily installed.

- It offers hundreds of plugins that ease your tasks, and you can share your own plugins with the community.

- It is built with Java, so it is portable to all major platforms, such as Windows, Linux, and macOS.

- Enhances the user interface by incorporating user inputs.

- It can easily distribute work across various machines for faster development, testing, and deployment.

Cons:

- Jenkins uses a single server architecture which limits the usage of resources and causes performance issues.

- There is a lack of federation which creates a large number of standalone Jenkins servers that are hard to manage.

- Jenkins plugins have dependencies that increase the management burden.

6.5 GitHub Actions

Another popular infrastructure automation tool is GitHub Actions. It offers a great way to set up an organization's continuous integration pipelines, with different types of integrations and workflows available for building a CI/CD pipeline. It can be used with both public and enterprise GitHub accounts, and GitHub runners give organizations a CI execution environment. GitHub Actions lets developers automate their workflows across issues, pull requests, and more, bringing automation directly into the SDLC on GitHub through event-driven triggers.
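Event-driven triggers come down to matching an incoming event (such as `push` or `pull_request`) and its branch against each workflow's filters. The Python sketch below models that matching for a subset of GitHub's documented filter syntax, where `*` matches any characters except `/` and `**` matches any characters. The workflow names and branch patterns are hypothetical, and GitHub's real matcher supports more operators (such as `?`, `+`, `[]`, and `!`) than this sketch.

```python
import re


def filter_to_regex(pattern):
    """Translate '*' and '**' branch filters into a compiled regular expression."""
    parts = []
    i = 0
    while i < len(pattern):
        if pattern.startswith("**", i):
            parts.append(".*")       # '**' may cross '/' boundaries
            i += 2
        elif pattern[i] == "*":
            parts.append("[^/]*")    # '*' stays within one path segment
            i += 1
        else:
            parts.append(re.escape(pattern[i]))
            i += 1
    return re.compile("".join(parts))


def workflows_to_run(workflows, event, branch):
    """Return the workflows whose filters for this event match the branch."""
    return [
        name
        for name, triggers in workflows.items()
        if any(filter_to_regex(p).fullmatch(branch) for p in triggers.get(event, []))
    ]


# Hypothetical workflows: event name -> list of branch filters.
WORKFLOWS = {
    "ci": {"push": ["main", "releases/**"], "pull_request": ["**"]},
    "deploy": {"push": ["main"]},
}

print(workflows_to_run(WORKFLOWS, "push", "main"))
print(workflows_to_run(WORKFLOWS, "push", "releases/v1/hotfix"))
```

In a real repository, these filters would live under `on.push.branches` (or `on.pull_request.branches`) in a YAML workflow file in `.github/workflows/`, and GitHub performs the matching itself.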

Pros:

- You can implement automation right in your repository and integrate it with your preferred tools.

- It supports macOS, Windows, and Ubuntu Linux, so you can build, test, and deploy code directly on the OS of your choice.

- GitHub Actions brings CI/CD directly to the GitHub flow and allows developers to create their own custom CI/CD workflows.

- Easily build and test code as well as automate build and test workflows.

- It is free to use for public repositories.

Cons:

- GitHub Actions workflows can be hard to implement and debug.

- You cannot run extremely heavy jobs on GitHub-hosted runners, because the maximum execution time per job is 6 hours.

- It does not provide proper and efficient testing services.

6.6 Docker

Docker is a tool based on operating-system-level virtualization, which creates isolated environments for applications known as containers. Containers can be shipped to a different server without any modification to the application, which is why Docker is often considered the next step in virtualization. Docker also has a huge developer community and keeps gaining popularity among DevOps developers in cloud computing.

Pros:

- Docker containers are isolated from each other, which improves security and makes it easy to remove an app cleanly.

- It can significantly reduce infrastructure costs.

- Developers can run applications in a consistent environment from design and development to support and maintenance.

- Developers have the ability to create containers for every process and deploy them instantly.

- Docker can increase the speed and efficiency of the CI/CD pipeline and improve productivity.

Cons:

- Managing a large number of containers with Docker alone is time-consuming.

- Docker is not suitable for applications that require a rich graphical user interface.

- Cross-platform compatibility is limited: for example, a container built for Linux does not run natively on a Windows host, and vice versa.

6.7 Kubernetes Operators

Kubernetes is a popular container orchestration tool, and Kubernetes Operators extend it so organizations can automate and manage applications using custom, user-defined logic. Operators are designed for automation and can be combined with GitOps methodologies to take full advantage of automated Kubernetes deployments. Teams that run workloads on Kubernetes can use operators to take care of repetitive tasks.
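At the heart of an operator is a reconcile loop: read the desired state from a custom resource, observe the actual state, and act on the difference until the two match. The Python sketch below models one reconcile pass in memory. `WebAppSpec`, `ClusterState`, the pod naming scheme, and the image tags are hypothetical; a real operator would talk to the Kubernetes API (for example through a framework such as Kopf, or the Go-based controller-runtime) instead of a dictionary.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class WebAppSpec:
    """Stands in for the .spec of a hypothetical 'WebApp' custom resource."""
    replicas: int
    image: str


@dataclass
class ClusterState:
    """Stands in for what the operator observes: pod name -> image."""
    pods: Dict[str, str] = field(default_factory=dict)


def reconcile(spec: WebAppSpec, state: ClusterState) -> List[str]:
    """One reconcile pass: compare desired spec with observed state and fix the difference."""
    actions = []
    # 1. Remove pods running an outdated image.
    for name, image in list(state.pods.items()):
        if image != spec.image:
            del state.pods[name]
            actions.append(f"delete {name} (outdated image {image})")
    # 2. Scale down if there are too many pods.
    while len(state.pods) > spec.replicas:
        name = sorted(state.pods)[-1]
        del state.pods[name]
        actions.append(f"delete {name} (scale down)")
    # 3. Scale up by creating pods under free names.
    i = 0
    while len(state.pods) < spec.replicas:
        name = f"webapp-{i}"
        if name not in state.pods:
            state.pods[name] = spec.image
            actions.append(f"create {name}")
        i += 1
    return actions


state = ClusterState(pods={"webapp-0": "web:v1", "webapp-1": "web:v2"})
spec = WebAppSpec(replicas=3, image="web:v2")
print(reconcile(spec, state))
print(reconcile(spec, state))  # already converged: no actions
```

Running the pass repeatedly is safe: once the observed state matches the spec, it returns no actions, which is why operators can reconcile continuously.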

Pros:

- It is simple, flexible and has the ability to automate many functions simultaneously.

- Operators allow you to use custom resources to manage applications and their components.

- It offers primitives and basic commands that operators can use to define complex actions.

- Kubernetes Operators provide more flexibility and ensure scalability.

- Kubernetes Services provide load balancing, which simplifies managing containers across multiple hosts.

Cons:

- There are constant innovations and numerous additions which makes the landscape confusing for new users.

- It can be slow, complicated, and difficult to manage.

- It has a steep learning curve.

6.8 Terraform

Terraform is one of the most popular cloud-agnostic infrastructure provisioning tools. Created by HashiCorp and written in Go, it supports provisioning on both private and public cloud infrastructure. It is good at maintaining the state of an organization's infrastructure, which it records in state files. Terraform automation comes in various forms and to varying degrees.
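The role of the state file can be illustrated with a simplified model of a plan: compare the desired configuration with the recorded state and classify each resource as create, update, or destroy. This Python sketch assumes both are plain dictionaries keyed by resource address; the addresses and attributes are hypothetical, and real Terraform plans also handle cases this ignores, such as replacing a resource whose attribute cannot be changed in place.

```python
from typing import Dict, List, Tuple

Config = Dict[str, Dict[str, object]]  # resource address -> attributes


def plan(desired: Config, state: Config) -> List[Tuple[str, str]]:
    """Diff desired configuration against recorded state, in address order."""
    changes = []
    for address in sorted(desired.keys() | state.keys()):
        if address not in state:
            changes.append(("create", address))
        elif address not in desired:
            changes.append(("destroy", address))
        elif desired[address] != state[address]:
            changes.append(("update", address))
    return changes


def apply(desired: Config, state: Config, changes: List[Tuple[str, str]]) -> Config:
    """Carry out the planned changes and return the new state to record."""
    new_state = dict(state)
    for action, address in changes:
        if action == "destroy":
            del new_state[address]
        else:  # create or update
            new_state[address] = dict(desired[address])
    return new_state


state = {
    "aws_s3_bucket.logs": {"versioning": False},
    "aws_instance.old": {"instance_type": "t3.micro"},
}
desired = {
    "aws_s3_bucket.logs": {"versioning": True},
    "aws_instance.web": {"instance_type": "t3.small"},
}

changes = plan(desired, state)
print(changes)
state = apply(desired, state, changes)
print(plan(desired, state))  # nothing left to do once state matches config
```

Without the recorded state, the tool could not tell that `aws_instance.old` should be destroyed, because that resource no longer appears in the configuration at all.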

Pros:

- Terraform Cloud lets you store variables such as passwords and cloud tokens securely.

- You can edit, build, and version your infrastructure using various coding techniques.

- Easily define your application and activate configuration files.

- Supports many cloud providers.

- You can easily find providers and modules to use with a private registry.

Cons:

- There is no built-in way to revert incorrect changes to resources.

- Security and collaboration features are costly and only included in the expensive enterprise plans.

- It does not support plans using Terraform’s Remote State.

6.9 Ansible

Ansible is an agentless configuration management and orchestration tool. Its configuration files, known as playbooks, are written in YAML and are easier to write than those of many other configuration management tools on the market.
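Much of Ansible's appeal comes from idempotent tasks: a task describes a desired end state, changes the system only when it is not already in that state, and reports `changed` or `ok` accordingly. The Python sketch below imitates that behavior for a single line in a file, similar in spirit to Ansible's `ansible.builtin.lineinfile` module; the `ensure_line` function and the config line are hypothetical and are not part of Ansible.

```python
import tempfile
from pathlib import Path


def ensure_line(path: str, line: str) -> dict:
    """Make sure `line` is present in the file; change nothing if it already is."""
    p = Path(path)
    lines = p.read_text().splitlines() if p.exists() else []
    if line in lines:
        return {"changed": False}  # already in the desired state ("ok")
    lines.append(line)
    p.write_text("\n".join(lines) + "\n")
    return {"changed": True}


with tempfile.TemporaryDirectory() as tmp:
    config = Path(tmp) / "sshd_config"
    first = ensure_line(str(config), "PermitRootLogin no")
    second = ensure_line(str(config), "PermitRootLogin no")  # re-running is safe
    content = config.read_text()

print(first, second)
```

The first run reports a change and the second reports none, which is what makes playbooks safe to re-run against the same hosts.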

Pros:

- Ansible is easy to set up and use, even without deep technical skills.

- You can orchestrate the entire software environment no matter on which platform it is deployed.

- You can easily automate the deployment of internally developed applications to your production systems.

- Automates a variety of devices and systems such as databases, firewalls, networks, and much more.

- It allows you to model complex IT workflows and create infrastructure components easily.

Cons:

- Ansible executes tasks sequentially and does not track dependencies between them.

- It has a number of issues related to performance, debugging, control flow, and complex data structures.

- Its user interface is limited, and it lacks a notion of state.

7. Conclusion

As this blog has shown, infrastructure automation tools are important for IT orchestration, efficiency, and an organization's digital transformation, and they help teams manage tasks easily and efficiently. When an IT organization decides to implement infrastructure automation, selecting the right set of tools is crucial. The right tools automate monotonous tasks and increase the efficiency of team members.

If you're still unsure which infrastructure tools to choose, consider major factors such as skill set, cost, usage, and functionality. A single tool rarely fits all of an organization's requirements, and you may need several tools to cover them. Select your tool set according to your team's requirements.

Vishal Shah

Vishal Shah has an extensive understanding of multiple application development frameworks and holds an upper hand with newer trends in order to strive and thrive in the dynamic market. He has nurtured his managerial growth in both technical and business aspects and gives his expertise through his blog posts.
