ML Kit is a mobile SDK that brings Google’s machine learning expertise to Android and iOS apps. By using Google's ML in mobile application development, you can make the apps you build more engaging, personalized, and robust.

APIs: Build machine learning features into your apps

- Image Labeling – Identify objects, locations, activities, products, and more

- Text Recognition (OCR) – Recognize and read text from images

- Face Detection – Detect faces and facial landmarks

- Barcode Scanning – Scan barcodes

- Landmark Detection – Identify well-known landmarks in an image

1. Image Labeling

Image labeling gives you insight into the content of images. When you use the API, you get a list of the entities that were identified: people, things, places, activities, and so on. The API also provides a score for each label that indicates the confidence of the match. With this information you can perform tasks such as metadata generation and content moderation.

There are two variants of this API. The first is the on-device API, which runs the labeling on the device itself. It is free and covers 400+ labels. The second is the cloud API, which runs on Google Cloud and covers 10,000+ labels. It is paid, but the first 1,000 requests per month are free.
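The confidence score is what makes the label list useful for something like metadata generation: you typically keep only the labels you trust. Here is a minimal sketch of that filtering step. It uses plain Python rather than the ML Kit SDK, and the `Label` type, the 0.7 threshold, the sample results, and the assumption that confidence lies between 0 and 1 are all illustrative, not taken from the API.

```python
from typing import NamedTuple


class Label(NamedTuple):
    """Hypothetical stand-in for one labeling result."""
    text: str
    confidence: float  # assumed to lie in [0.0, 1.0]


def confident_labels(labels, min_confidence=0.7):
    """Return the labels at or above the threshold, most confident first."""
    kept = [label for label in labels if label.confidence >= min_confidence]
    return sorted(kept, key=lambda label: label.confidence, reverse=True)


# Made-up results for a street photo; real values would come from the labeler.
results = [
    Label("Street", 0.92),
    Label("Car", 0.81),
    Label("Dog", 0.40),
    Label("Building", 0.75),
]

tags = [label.text for label in confident_labels(results)]
print(tags)  # ['Street', 'Car', 'Building']
```

A higher threshold gives fewer but more reliable tags, which usually suits content moderation better than a long list of uncertain labels.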

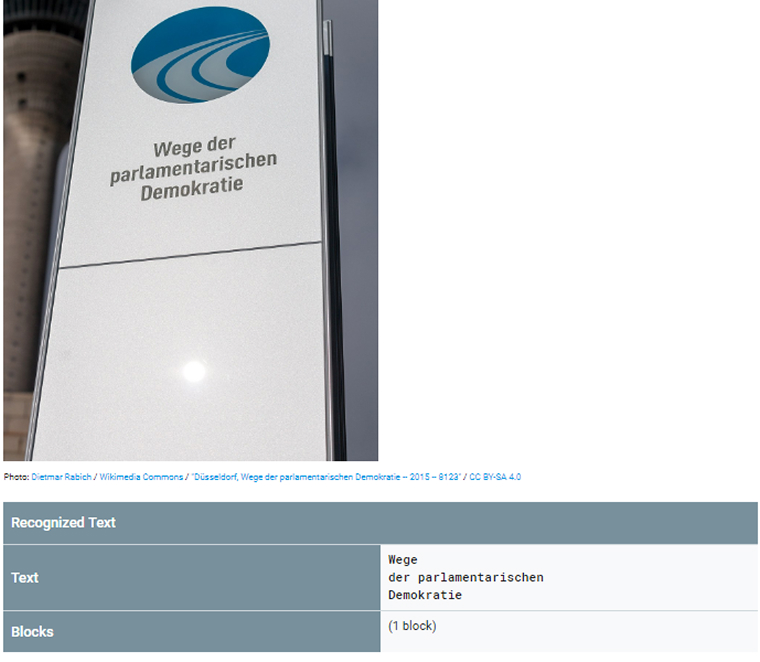

2. Text Recognition

Text recognition can automate data entry for credit cards, receipts, and business cards, or help organize photos. With the cloud-based API, you can extract and read text from documents, which you can use to improve accessibility or translate documents in a mobile application.

The Cloud Vision API can recognize multiple languages within a single image. Some applications also recognize text on real-world objects, for example reading a train number from the side of a train.

3. Face Detection

The basic feature of face detection is drawing boxes around faces. With ML it goes deeper and can detect whether a person is smiling, whether their eyes are open, and so on. Face detection finds the landmarks and classifications in a face. A landmark is a part of the face such as the right cheek, left cheek, base of the nose, or eyebrow. Each of these detections can be used in custom mobile application development.

A classification is something like an event to detect. Currently, ML Kit Vision can classify only whether the eyes are open and whether the person is smiling. ML Kit can also perform detection in real time, for example in a video chat or another app that responds to the user’s expression.

Below are some of the main points about the face detection feature of ML Kit.

The following features are available for face tracking:

- Face tracking extends face detection to video sequences.

- Any face appearing in a video can be tracked for any length of time.

- That is, faces that are detected in consecutive video frames can be identified as being the same person. The mechanism works based on the position and motion of the face in the video sequence.
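To make the idea of position-based tracking concrete, here is a toy sketch in plain Python. It is not ML Kit's actual algorithm: it is a simple greedy matcher that gives a face the same ID when its bounding box overlaps a box from the previous frame, and it looks at position only, not motion. The `FaceTracker` class, the `(left, top, right, bottom)` box format, and the 0.3 overlap threshold are assumptions made for illustration.

```python
from itertools import count


def iou(a, b):
    """Intersection-over-union of two boxes given as (left, top, right, bottom)."""
    left, top = max(a[0], b[0]), max(a[1], b[1])
    right, bottom = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, right - left) * max(0, bottom - top)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


class FaceTracker:
    """Toy tracker: a face keeps its ID if it overlaps a face from the previous frame."""

    def __init__(self, min_iou=0.3):
        self.min_iou = min_iou
        self.tracks = {}            # tracking ID -> box seen in the previous frame
        self._next_id = count(1)

    def update(self, boxes):
        """Assign a tracking ID to each face box of the current frame."""
        unmatched = dict(self.tracks)
        current = {}
        for box in boxes:
            # Pick the best-overlapping previous face that is still unclaimed.
            best_id = max(unmatched, key=lambda i: iou(unmatched[i], box), default=None)
            if best_id is not None and iou(unmatched[best_id], box) >= self.min_iou:
                del unmatched[best_id]
            else:
                best_id = next(self._next_id)   # face not seen in the previous frame
            current[best_id] = box
        self.tracks = current
        return current


tracker = FaceTracker()
frame1 = tracker.update([(10, 10, 60, 60), (200, 20, 250, 70)])
frame2 = tracker.update([(205, 22, 255, 72), (14, 12, 64, 62)])    # both faces moved a little
frame3 = tracker.update([(18, 14, 68, 64), (400, 300, 450, 350)])  # one face left, a new one appeared
print(sorted(frame1), sorted(frame2), sorted(frame3))  # [1, 2] [1, 2] [1, 3]
```

The two faces keep IDs 1 and 2 across the first two frames even though they arrive in a different order, and the newcomer in the third frame gets a fresh ID.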

As described above, ML Kit finds landmarks such as the left eye, right eye, and base of the nose. A landmark is a point of interest within a face.

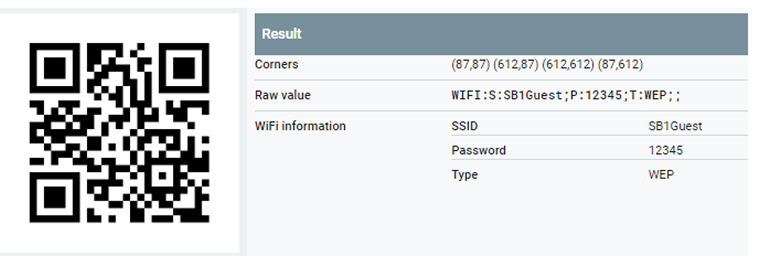

4. Barcodes

Barcodes are a familiar way to pass private or personal information. Nowadays data such as contact details or Wi-Fi network credentials can be encoded in a barcode. An application can read that barcode in real time, and because ML Kit automatically recognizes the data it contains, the app can respond intelligently.
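To show what is inside such a code, here is a small sketch that parses the widely used `WIFI:T:...;S:...;P:...;;` text convention for Wi-Fi QR codes. ML Kit does this kind of parsing for you, so this is only an illustration in plain Python. The `parse_wifi_payload` function, the field names in the result, and the sample payload are made up for the example.

```python
def parse_wifi_payload(payload):
    """Parse a 'WIFI:T:WPA;S:ssid;P:password;;' string into a dict of fields."""
    prefix = "WIFI:"
    if not payload.startswith(prefix):
        raise ValueError("not a Wi-Fi payload")

    fields, current, escaped = [], [], False
    for ch in payload[len(prefix):]:
        if escaped:
            current.append(ch)                 # keep the escaped character literally
            escaped = False
        elif ch == "\\":
            escaped = True                     # the next character is literal
        elif ch == ";":
            fields.append("".join(current))    # an unescaped ';' ends a field
            current = []
        else:
            current.append(ch)

    names = {"S": "ssid", "T": "encryption", "P": "password", "H": "hidden"}
    result = {}
    for field in fields:
        key, sep, value = field.partition(":")
        if sep and key in names:
            result[names[key]] = value
    return result


info = parse_wifi_payload(r"WIFI:T:WPA;S:Cafe\;Guest;P:s3cret\:pw;;")
print(info)  # {'encryption': 'WPA', 'ssid': 'Cafe;Guest', 'password': 's3cret:pw'}
```

With the fields separated like this, an app could offer to join the network instead of just showing the raw text.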

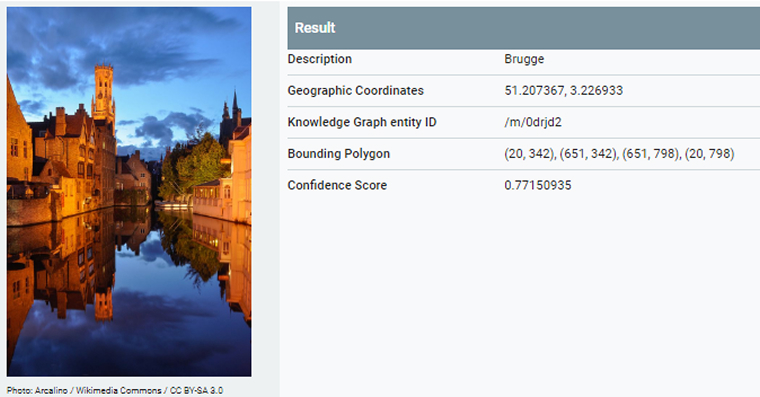

5. Landmark Recognition

You can recognize well-known landmarks in an image. The API takes the image data and recognizes the landmark in it. It also returns the landmark’s geographic coordinates and the region of the image where the landmark was found. The Vision API references a database of over 70,000 landmarks.

The database is based on the most popular public photos, and each row includes the name, location, and Knowledge Graph MID of a landmark. It is continuously updated to include new landmarks.

If ML Kit’s predefined models don’t meet your needs, you can use a custom TensorFlow Lite model with ML Kit. TensorFlow Lite uses several techniques to achieve low latency, such as kernels optimized for mobile apps and quantized kernels that allow smaller and faster (fixed-point math) models.

According to Google, it is also working on a feature that lets developers upload a full TensorFlow model, along with training data, and receive a compressed TensorFlow Lite model in return. Google is currently still testing this feature.
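To give a feel for why quantized, fixed-point models are smaller, here is a minimal sketch of 8-bit affine quantization: each float is stored as one integer in 0..255 plus a shared scale and zero point, and is recovered as `scale * (q - zero_point)`. This is plain Python written for illustration, not TensorFlow Lite code, and the function names and sample weights are made up.

```python
def quantization_params(values, qmin=0, qmax=255):
    """Choose a scale and zero point so the value range maps onto [qmin, qmax]."""
    lo = min(min(values), 0.0)   # the range must include 0.0 so that it
    hi = max(max(values), 0.0)   # can be represented exactly
    scale = (hi - lo) / (qmax - qmin) or 1.0   # fall back to 1.0 if all values are 0
    zero_point = int(round(qmin - lo / scale))
    return scale, zero_point


def quantize(values, scale, zero_point, qmin=0, qmax=255):
    """Map floats to integers in [qmin, qmax]."""
    return [min(qmax, max(qmin, round(v / scale) + zero_point)) for v in values]


def dequantize(qvalues, scale, zero_point):
    """Recover approximate floats from the stored integers."""
    return [scale * (q - zero_point) for q in qvalues]


weights = [-1.0, -0.25, 0.0, 0.5, 1.0]
scale, zero_point = quantization_params(weights)
q = quantize(weights, scale, zero_point)
restored = dequantize(q, scale, zero_point)

for original, stored, approx in zip(weights, q, restored):
    print(f"{original:+.3f} -> {stored:3d} -> {approx:+.4f}")
```

Each weight now fits in one byte instead of four, at the cost of a rounding error of at most about one quantization step.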
